What steps should startups take to be fully compliant?

1. Background: from concepts to compliance reality

The EU AI Act is no longer a future-facing instrument. It entered into force on 1 August 2024 and applies in stages. The general application date is 2 August 2026, but the prohibitions in Chapter II have applied since 2 February 2025, while the rules on general-purpose AI models, notified bodies, parts of the governance framework, and penalties have applied since 2 August 2025. For startups, that means compliance cannot be treated as a single deadline exercise. The legal question is not merely whether the company will be ready by August 2026, but which obligations already apply to its products, models, and business structure today. The Regulation was adopted to create a uniform framework for the placing on the market, putting into service, and use of AI systems across the Union, while reducing fragmentation and protecting health, safety, and fundamental rights.
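The staged timetable can be illustrated with a minimal Python sketch. It uses only the application dates given in this section; the stage labels are shorthand, and the names `STAGES` and `stages_in_application` are hypothetical helpers introduced for illustration, not an official tool or a complete statement of the Act's transitional rules.

```python
from datetime import date
from typing import List

# Application dates as stated in this section; labels are informal shorthand.
STAGES = [
    (date(2025, 2, 2), "Chapter II prohibitions"),
    (date(2025, 8, 2), "GPAI model rules, notified bodies, parts of governance, penalties"),
    (date(2026, 8, 2), "general application"),
]


def stages_in_application(on: date) -> List[str]:
    """Return the label of every stage whose start date is on or before `on`."""
    return [label for start, label in STAGES if start <= on]


if __name__ == "__main__":
    for label in stages_in_application(date(2025, 9, 1)):
        print(label)
```

Querying the function with the current date makes the point of the paragraph concrete: the answer changes over time, so the compliance position has to be reassessed at each stage rather than once.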

2. Role allocation as the starting point of compliance

The AI Act uses the umbrella concept of “operator,” but it does not impose a uniform set of obligations on operators as a single category. Instead, it distributes obligations across distinct legal roles within the AI value chain, including provider, deployer, importer, distributor and other specifically defined actors. For startups, however, role-mapping cannot stop at the level of operator categories alone. The AI Act is also built around a second distinction of equal importance: the distinction between AI systems and general-purpose AI models. Compliance analysis must therefore address two questions in parallel: first, in what legal role the undertaking acts; and second, whether the relevant object of regulation is an AI system, a general-purpose AI model, or both.

This distinction matters because the AI Act does not treat AI systems and general-purpose AI models as a single regulatory category. AI systems are regulated primarily through a use- and risk-based framework, whereas general-purpose AI models are subject to a distinct regulatory layer. A startup may therefore be affected at the level of a system, a model, or both simultaneously.

For startups, the most important roles are usually provider and deployer. A startup is likely to be considered a provider if it develops an AI system and places it on the market or puts it into service under its own name or trademark. That is the role to which the core ex ante obligations of the AI Act are primarily attached. A startup is likely to qualify as a deployer where it uses an AI system under its own authority in the course of a professional activity. This distinction matters because the provider is usually the actor responsible for classification, documentation, conformity assessment, and broader lifecycle compliance, whereas the deployer’s obligations are more closely tied to the system’s actual use in practice.

The relevant test is functional rather than rhetorical. A startup should ask:

Who determines the intended purpose of the market-facing product?

Under whose name is it placed on the market or put into service?

Who controls the relevant design and compliance decisions?

And who uses the system in practice?

If the company controls the market-facing system and places it on the market or puts it into service under its own name or trademark, it will generally be acting as the provider of that system. If it uses a system within its own operations under its authority, it will generally be acting as a deployer in relation to that use. Importer or distributor status may also arise when a company brings a third-party system into the Union or makes it available on the market.
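The functional questions above can be sketched as a rough screening helper. This is an illustrative simplification, not the legal test: the field names on the hypothetical `Activity` record and the function `candidate_roles` are assumptions introduced here, and the output is only a list of roles that deserve closer legal analysis.

```python
from dataclasses import dataclass
from typing import Set


@dataclass
class Activity:
    """Hypothetical answers to the functional questions for one AI system."""
    places_on_market_under_own_name: bool = False
    uses_under_own_authority: bool = False
    brings_third_party_system_into_union: bool = False
    makes_third_party_system_available: bool = False


def candidate_roles(activity: Activity) -> Set[str]:
    """Collect every role the answers point to; roles are not mutually exclusive."""
    roles = set()
    if activity.places_on_market_under_own_name:
        roles.add("provider")
    if activity.uses_under_own_authority:
        roles.add("deployer")
    if activity.brings_third_party_system_into_union:
        roles.add("importer")
    if activity.makes_third_party_system_available:
        roles.add("distributor")
    return roles


if __name__ == "__main__":
    startup = Activity(places_on_market_under_own_name=True,
                       uses_under_own_authority=True)
    print(sorted(candidate_roles(startup)))
```

The helper returns a set rather than a single value on purpose: a company that sells a system under its own name and also uses it internally shows up as both provider and deployer.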

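Role allocation is also not fixed once and for all. In certain circumstances, a substantial modification of a system or a change in its intended purpose may affect the allocation of roles under the Act, so the analysis should be repeated whenever the product changes materially.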

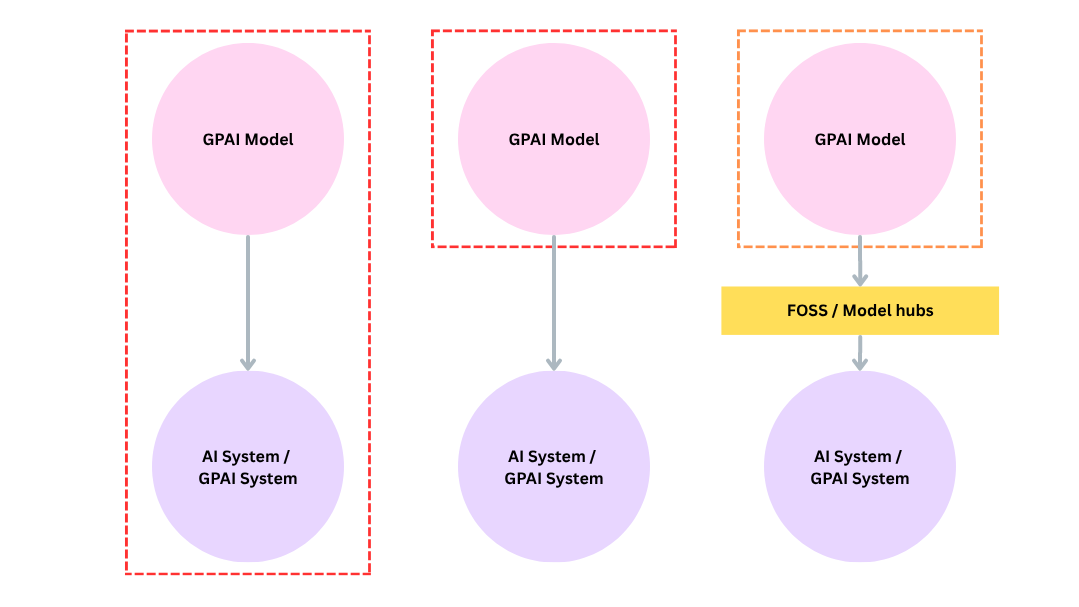

The importance of downstream providers becomes clear precisely where the two regulatory levels intersect. Many startups do not develop their own foundation or general-purpose AI models. Instead, they build products on top of third-party models. In that case, they may still qualify as the provider of the downstream AI system, even though they are not the provider of the underlying general-purpose AI model. This is one of the central structural assumptions of the AI Act: upstream model governance and downstream system governance are related but not the same.

That is why the Chapter V obligations imposed on providers of GPAI models include duties to prepare documentation and provide information to downstream providers. The point of those duties is not to shift compliance downstream in general, but to make downstream compliance possible when a startup integrates the model into a system that it then places on the market or puts into service for a specific intended purpose. Put differently, compliance at model level does not replace compliance at system level. It supports it. The provider of the model and the provider of the downstream system occupy different positions in the regulatory chain and may each bear distinct obligations.

This distinction is particularly important where a startup fine-tunes, adapts, packages, or commercialises a model for a defined use case. In such situations, the legally decisive object is often not the model in the abstract, but the AI system resulting from that integration and made available to users or customers for a defined intended purpose. The startup may therefore be neither a mere user of another company’s model nor the original model developer, yet still bear provider-level obligations for the downstream system. The question is increasingly relevant in practice, especially for agentic and multi-agent systems, where the relationship between models, system components and market-facing functionality may be harder to delineate for the purposes of the AI Act.

A further difficulty is that roles under the AI Act are not mutually exclusive. The same undertaking may simultaneously be the provider of one AI system, the deployer of that system in its own operations, the distributor of another AI-enabled product, and, in some cases, the provider of a general-purpose AI model. The Act is therefore functional in structure: it allocates obligations by reference to the legally relevant activity performed in relation to a model or system, not by reference to a single stable corporate identity. The compliance consequence is that startups should not ask only what they are “in general” under the AI Act. They should ask which roles they perform across their products, models, supply chain and internal use cases. Where roles overlap, obligations may accumulate rather than collapse into one simplified package. A startup that places a downstream AI system on the market while also using that same system internally may need to assess both provider-level and deployer-level duties. Likewise, a company that places a GPAI model on the market and also commercialises a downstream AI system built on that model may be subject both to model-level duties under Chapter V and to system-level obligations under the general AI-system framework.
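The idea that obligations accumulate across roles, rather than collapsing into one package, can be sketched as a set union. The duty labels below paraphrase this section and are not a complete legal inventory; the `DUTIES` table and the function `accumulated_duties` are hypothetical names used only for illustration.

```python
from typing import Iterable, Set, Tuple

# (role, regulatory object) -> duty labels paraphrased from this section.
# Illustrative only: not a complete list of obligations under the Act.
DUTIES = {
    ("provider", "system"): {
        "classification",
        "documentation",
        "conformity assessment",
        "lifecycle compliance",
    },
    ("deployer", "system"): {"use-related obligations"},
    ("provider", "gpai_model"): {"Chapter V model-level duties"},
}


def accumulated_duties(positions: Iterable[Tuple[str, str]]) -> Set[str]:
    """Union the duties of every (role, object) position the company occupies."""
    duties = set()
    for position in positions:
        duties |= DUTIES.get(position, set())
    return duties


if __name__ == "__main__":
    positions = [("provider", "system"), ("deployer", "system")]
    for duty in sorted(accumulated_duties(positions)):
        print(duty)
```

Keying the table on both the role and the regulatory object mirrors the two parallel questions of the analysis: a company that provides a GPAI model and a downstream system picks up both the model-level and the system-level entries.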

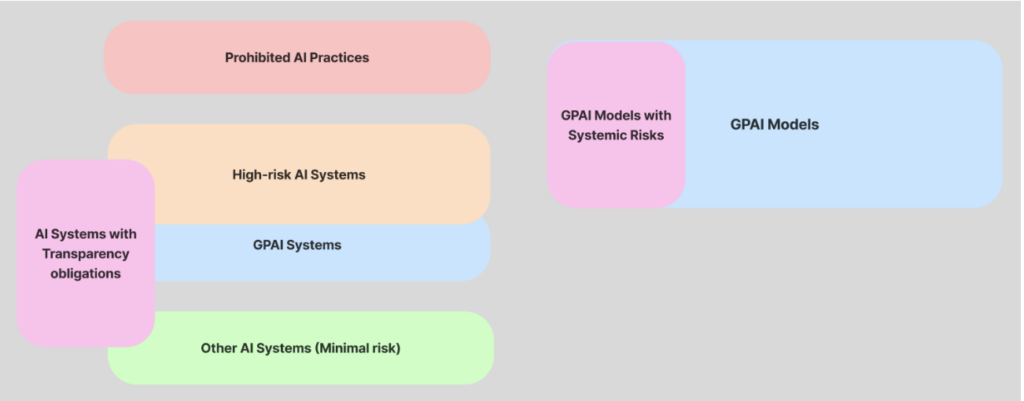

Once role allocation and the model/system distinction have been clarified, the decisive compliance issue becomes one of classification, especially at the level of AI systems. The AI Act’s main logic for systems is risk-based and use-case sensitive. It distinguishes between prohibited practices, high-risk systems, systems subject primarily to transparency obligations, and systems that remain outside the categories triggering the core ex ante compliance architecture. For startups, the central legal task is therefore not simply to determine whether their product “uses AI”, but to determine how the system should be classified in light of its intended purpose and operational context.

This is why the classification of an AI system is central to the compliance analysis. It determines whether the system falls within the prohibited practices listed in Article 5, qualifies as a high-risk AI system, or is subject to specific transparency obligations under the Act. At the level of general-purpose AI models, by contrast, the analysis is structured differently. The key question is not the classification of the model in the same sense as for AI systems, but whether the undertaking qualifies as a provider of a general-purpose AI model and, if so, whether the model is subject to the additional requirements applicable to general-purpose AI models with systemic risk.

For startups, the AI Act is better understood as a layered regulatory framework than as a single compliance checklist. The relevant questions are sequential.

First, in what legal capacity does the undertaking act under the AI Act?

Second, is the relevant regulatory object an AI system, a general-purpose AI model, or both?

Third, where the undertaking relies on a third-party general-purpose AI model, is it acting only as a deployer, or also as the provider of a downstream AI system built on that model?

Fourth, do several roles coincide within the same corporate structure or across the same product lifecycle?

Fifth, how should the relevant AI system be classified under the Act, in particular as a prohibited AI practice, a high-risk AI system, or a system subject to specific transparency obligations?

Sixth, which obligations are already applicable, and which will apply later under the Act’s staged timetable?

Only once those questions have been answered can the applicable compliance pathway under the Act be identified.
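The sequential character of these questions can be sketched as a simple ordered checklist. The short keys in `QUESTIONS` and the function `next_open_question` are hypothetical names chosen for this illustration; the sketch only enforces the order of the analysis and says nothing about how each question should be answered.

```python
from typing import Dict, Optional

# Short keys for the six questions, in the order in which they are posed.
QUESTIONS = [
    "legal_role",
    "regulatory_object",
    "downstream_provider",
    "overlapping_roles",
    "system_classification",
    "applicable_timetable",
]


def next_open_question(answers: Dict[str, str]) -> Optional[str]:
    """Return the first question without an answer, or None if all are answered."""
    for key in QUESTIONS:
        if key not in answers:
            return key
    return None


if __name__ == "__main__":
    answers = {"legal_role": "provider", "regulatory_object": "AI system"}
    print(next_open_question(answers))
```

A return value of `None` corresponds to the point at which the compliance pathway can be identified; any other value names the question that still blocks it.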

The same logic should guide the assessment of current implementation developments. The Commission’s Digital Omnibus initiative is presented as a package of targeted simplification measures designed to facilitate smoother and more proportionate application of selected parts of the AI Act, rather than to alter its underlying structure. At the same time, the practical application of the Act is expected to be supported by harmonised standards, which are intended to translate the Regulation’s general legal requirements into more concrete technical and organisational means of implementation.

In parallel, the implementation of the Act is also being shaped by complementary soft-law instruments. The Commission has issued guidelines on the definition of an AI system and on prohibited AI practices, intended to support the application of the first rules that became applicable in February 2025. In relation to Chapter V, the Commission has also issued guidance on the obligations of providers of general-purpose AI models, while the General-Purpose AI Code of Practice has been confirmed by the Commission and the AI Board as an adequate voluntary tool under Article 56. In relation to Chapter IV, the Commission is preparing further guidance and a voluntary code of practice on transparency of AI systems, including the marking and labelling of AI-generated content.
